3 Conversion Rate Optimization Myths Debunked

Landing page optimization is all the rage. With clients continuing to increase their investment in online media, converting more of your clicks into new leads or paying customers has never been more important. While it’s great to see more people testing and more people aware of the importance of optimization, I’ve also noticed that all the hype around optimization has caused some “myths” to become popular among online marketers.

In this article, I’ll debunk 3 common conversion rate optimization myths and give you some best practices that you can apply to your future testing.

1. Statistical Significance Means Revenue

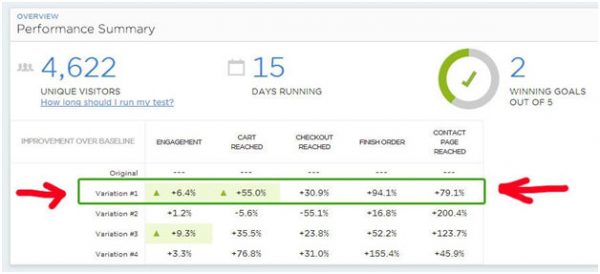

When you’re finished running a test using Visual Website Optimizer, Optimizely, or any other A/B testing platform, you’re going to get a summary of the results of your experiment that looks something like this:

A summary of some A/B testing results in Optimizely.

Great! You nearly doubled the number of completed orders, with a 94% increase. And better still, you’ve determined that you likely beat your control at a statistical confidence level of 95%.

So what now? Are you going to see twice as much revenue immediately? You’ll likely see a revenue increase from an improvement this dramatic, but don’t be surprised if revenue doesn’t rise by the same percentage. Why is this possible?

- Traffic Sources Change: Most websites – and especially new websites – have lots of variation in their traffic sources. For example, you may one day get a spike in traffic from Twitter or Pinterest, or some other “pick up”. Remember that the conversion rate increase that you saw was for a set period of time and a set mix of traffic. If your traffic changes, you can’t guarantee that your new landing page or website is going to perform as well.

- Website Performance Fluctuates: Website speed affects conversion rate and, despite your best efforts, your website’s performance will vary from user to user. This can sometimes explain why a new site performs better or worse than it did during testing.

- There’s Still a Chance It’s Not Significant: Many people treat a 95% or even a 99% confidence level as a guarantee that they beat their baseline conversion rate. It is a very good “chance”, but remember that, by definition, you could be part of that unlucky 5% or 1% that doesn’t actually see an uplift in conversion.

As a general rule, I recommend running tests for a minimum amount of time, rather than stopping as soon as you hit a given level of statistical significance, to ensure that you are actually seeing higher revenue and stronger results. This should take care of most of the issues around traffic fluctuation and give you a more accurate measure of what your lift is going to be.
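To make the gap between "significant" and "guaranteed lift" concrete, here is a minimal Python sketch of the kind of calculation a testing tool might run. It is not how Optimizely or VWO compute their results; the function name `ab_summary` and the visitor and order counts are made up for illustration, chosen only so that the observed lift comes out at 94%. It uses a standard two-proportion z-test, and it approximates the range for the relative lift by treating the control rate as fixed.

```python
from math import sqrt
from statistics import NormalDist


def ab_summary(control_conv, control_n, variant_conv, variant_n, level=0.95):
    """Two-proportion z-test plus an approximate range for the relative lift."""
    p_c = control_conv / control_n
    p_v = variant_conv / variant_n
    diff = p_v - p_c

    # Pooled standard error: used for the test of "no real difference".
    pooled = (control_conv + variant_conv) / (control_n + variant_n)
    se_pooled = sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / variant_n))
    z = diff / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    # Unpooled standard error: used for the interval around the observed difference.
    se_diff = sqrt(p_c * (1 - p_c) / control_n + p_v * (1 - p_v) / variant_n)
    z_crit = NormalDist().inv_cdf(1 - (1 - level) / 2)
    low = diff - z_crit * se_diff
    high = diff + z_crit * se_diff

    return {
        "relative_lift": diff / p_c,
        "p_value": p_value,
        # Approximation: divides by the observed control rate as if it were exact.
        "lift_range": (low / p_c, high / p_c),
    }


# Hypothetical counts: 50 orders from 2,000 visitors vs. 97 orders from 2,000 visitors.
summary = ab_summary(50, 2000, 97, 2000)
print(summary)
```

With these made-up numbers the result clears the 95% bar comfortably, yet the plausible range for the lift is wide, running from roughly +47% to +141%. That spread is one more reason to keep measuring real revenue after the winner goes live.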

2. Small Changes Will Lead to a Massive Difference

One of my biggest frustrations with the current marketing campaigns from most CRO firms and CRO software providers is that they make it appear that small tweaks in a website’s design can make a massive difference.

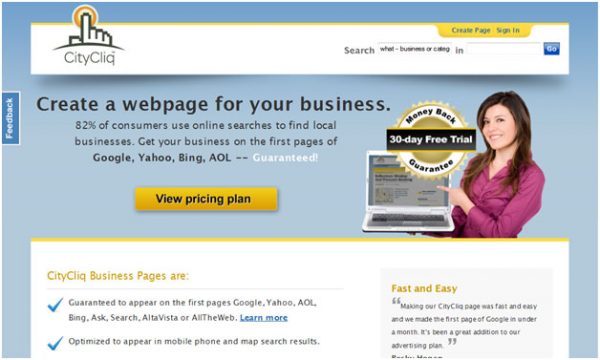

Here’s an example of what I mean – VWO published this case study showing how a single headline change led to a 90% improvement in conversion rate.

Case Study by Visual Website Optimizer

The headline “create a webpage for your business” beat the original headline “businesses grow faster online” by 90%. There’s no question that this is a great test with great results, and my hat is off to the team that executed this. But, I want to again point out some caveats with this based on my experience with testing:

- Headline Changes Mostly Affect Conversion by 5-10%: All you are doing here is changing one piece of text – you’re not doing anything drastic that “warrants” an improvement in conversion rate this large. So, while it’s possible you’ll see a dramatic impact like this, don’t expect to get these results from early testing.

- Drastic Redesigns Often Double Conversion: In order to double your conversion rate, you normally need to take a “risk”. This means investing time in building a drastic redesign of your site with a new layout, colors, images, etc.

If you do start with headline changes or changes to the CTA (Call to Action), running a multivariate test might be the best way to go after the “low hanging fruit” on your landing page. But don’t expect changing a single element to improve conversion so dramatically.
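As a rough illustration of what a multivariate test involves, the sketch below builds every headline and CTA combination and shows how traffic gets split across them. The two headlines come from the case study above; the CTA labels, the function names, and the daily traffic figure are hypothetical, and real testing tools handle allocation in their own ways.

```python
from itertools import product

headlines = [
    "businesses grow faster online",       # original headline in the case study
    "create a webpage for your business",  # winning headline in the case study
]
cta_labels = ["Get started", "Try it free"]  # hypothetical CTA variants


def build_variants(headlines, cta_labels):
    """Return every headline/CTA combination (a full-factorial design)."""
    return [{"headline": h, "cta": c} for h, c in product(headlines, cta_labels)]


def visitors_per_variant(daily_visitors, variants):
    """Even split of traffic: each added element multiplies the number of cells."""
    return daily_visitors // len(variants)


variants = build_variants(headlines, cta_labels)
for variant in variants:
    print(variant)

# Hypothetical traffic of 1,000 visitors a day.
print(visitors_per_variant(1000, variants), "visitors per variant per day")
```

Two headlines and two CTAs already mean four variants, so each one sees only a quarter of your traffic. That is worth keeping in mind when deciding how long the test needs to run.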

3. Static Pages Lead to Maximal Conversion

The overwhelming majority of websites today are static. They show the same experience to every visitor – maybe with some customization (logged in, logged out, etc.) – but generally the same.

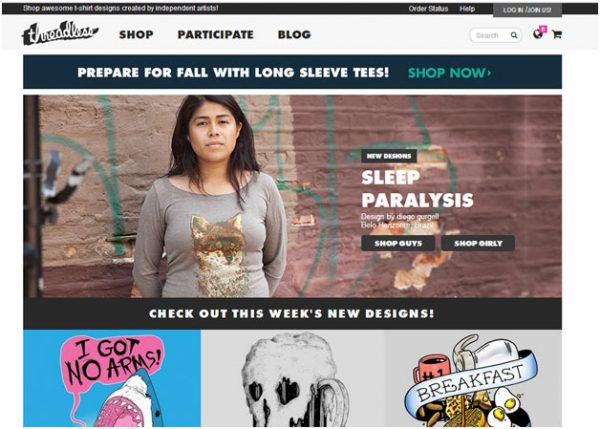

When customers test – especially e-commerce clients – they are mostly testing the impact of static changes on design. Let’s use Threadless as an example:

The homepage of Threadless.

Many e-commerce clients we work with, who have done some basic A/B testing in the past, would first prioritise static elements, for example:

- Landing Page CTA: “Prepare for fall with long sleeve tees” – is this ideal? If not, what is the perfect message here?

- Landing Page Image: A male or female? Bright or dark colors?

- Designs on Homepage: Which should you be featuring?

Even if you were to maximize this, however, you would still be missing the bulk of the opportunity. Here are some better questions to ask yourself:

- What products do you feature on the homepage depending on what someone searched for? How do you know immediately what product to deliver to what customer?

- What experience should you deliver to new vs existing customers? Will repeat buyers need the same offers to get them to convert – such as an offer for free shipping? Will repeat customers need a wider or a shorter selection of products?

- What is the likely age/gender of your customer? You should be able to show different images/colors depending on the age and gender of your customer. For example, 25-year-old males will likely respond better to a picture of a similar male wearing a shirt than to a picture of a female (and females will likely go the other way).

Personalizing your site will drive the highest lift. Static optimization will only get you so far – once you have completed basic A/B and multivariate testing to determine what static changes will make the largest difference, you’re not done! You need to invest the time to personalize your site to get the maximal lift.
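Here is a minimal sketch of what rule-based personalization could look like, using the questions above as a starting point. Everything in it is an assumption made for illustration: the `Visitor` fields, the `choose_homepage` function, and the rules themselves. In particular, dropping the free shipping offer for repeat buyers is a hypothesis you would want to test, not a recommendation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Visitor:
    """Hypothetical visitor profile; a real site would fill this from its own data."""
    is_returning: bool
    search_term: Optional[str] = None


DEFAULT_HERO = "Prepare for fall with long sleeve tees"


def choose_homepage(visitor: Visitor) -> dict:
    """Pick homepage content from simple rules instead of one static page."""
    experience = {
        "hero": DEFAULT_HERO,
        "featured": "bestsellers",
        "offer": "free shipping",
    }

    # Feature products related to what the visitor searched for, when known.
    if visitor.search_term:
        experience["featured"] = f"products matching '{visitor.search_term}'"

    # Hypothesis to test: repeat buyers may convert without the same offer.
    if visitor.is_returning:
        experience["offer"] = None

    return experience


new_searcher = choose_homepage(Visitor(is_returning=False, search_term="hoodies"))
repeat_buyer = choose_homepage(Visitor(is_returning=True))
print(new_searcher)
print(repeat_buyer)
```

Each rule here is itself something to A/B test, so personalization builds on your static testing rather than replacing it.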

Summary

Be sure to measure your real revenue lift rather than simply relying on statistics. Don’t expect simple changes to make a massive impact. And make sure you personalize your site once you’ve finished with static testing. What other CRO myths have you found that haven’t been true?
